Labs: Using Web AR to augment the Easter egg hunt!

Photo by Markus Spiske on Unsplash

Web-AR provides augmented reality experiences through the browser to everyone with a smartphone. With no app download needed, the experience is highly accessible. We decided to try it out and created an augmented Easter egg hunt experience for a local community.

A-Frame, a popular framework for creating web-based VR experiences, just added support for AR.js, and with that it now provides both marker-based and location-based augmented reality. The latter is what we used in this project.

Location-based AR has been around for some time now. In essence, it overlays graphics on the camera view using only GPS position and device orientation, with no need for markers or any other means of understanding the environment. It has been used for marketing, navigation, and mobile games such as Pokémon Go.

Goal

We started this lab project a couple of days before the Easter holiday, so we thought that augmenting the classic Easter egg hunt would be fun to explore and a good test bed for A-Frame. We aimed to give everyone in the community a novel experience and a fun way to find the hidden eggs.

Concept

First of all, physical eggs were hidden. We wanted to keep the core experience true to its origins.

We created a small web app that provided two ways to find the hidden locations: a Google Maps view and a location-based AR view. The GPS locations pointed to roughly the right spot, but some amount of searching was of course still required.

AR is cool in the way it lets us interact by physically moving around instead of tapping on the screen. That said, for mobile AR we do see on-screen controls as a valuable and intuitive way for users to interact. Here we decided to limit touch interactions and switch between the map view and the AR view based on how the device is held. Looking down shows the map; looking up shows the camera with the AR overlays.
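To illustrate the idea of switching views based on how the device is held, here is a minimal sketch. It assumes the front-to-back tilt (`beta`, in degrees) from the browser's `deviceorientation` event is used as input, where roughly 0 means the phone lies flat and roughly 90 means it is held upright. The function name and both thresholds are hypothetical and not taken from our project; the gap between the thresholds is there to avoid flickering between views when the phone is held at an in-between angle.

```typescript
type View = "map" | "ar";

// Hypothetical thresholds in degrees; tune them for your own experience.
const AR_ABOVE_DEG = 60;  // phone held up towards the horizon
const MAP_BELOW_DEG = 40; // phone tilted down, user looking at the screen

// Decide which view to show given the current view and the latest tilt.
// Between the two thresholds the current view is kept (hysteresis).
function nextView(current: View, betaDeg: number | null): View {
  if (betaDeg === null) return current; // no sensor reading available
  if (betaDeg >= AR_ABOVE_DEG) return "ar";
  if (betaDeg <= MAP_BELOW_DEG) return "map";
  return current;
}

// In a browser this could be wired up roughly like this:
//   let view: View = "map";
//   window.addEventListener("deviceorientation", (e) => {
//     view = nextView(view, e.beta);
//   });
```

Keeping the decision in a pure function like this makes it easy to test without a device in hand.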

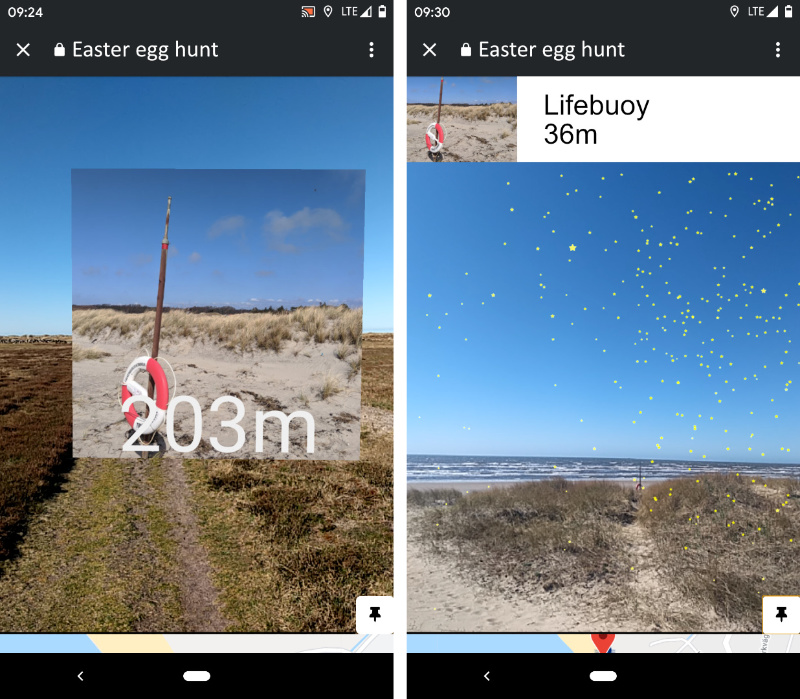

In the AR view we show the distance to each location along with an image of the spot. These scale automatically and are sorted by A-Frame without any code needed. Pretty neat.
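For readers curious about what goes into a distance label, the distance between two GPS coordinates can be estimated with the haversine formula. The sketch below is an illustration under our own assumptions, not the code A-Frame or AR.js runs internally; the type and function names are hypothetical.

```typescript
interface LatLon {
  lat: number; // degrees
  lon: number; // degrees
}

const EARTH_RADIUS_M = 6371000; // mean Earth radius in meters

function toRad(deg: number): number {
  return (deg * Math.PI) / 180;
}

// Great-circle distance in meters between two coordinates (haversine formula).
function haversineMeters(a: LatLon, b: LatLon): number {
  const sinLat = Math.sin(toRad(b.lat - a.lat) / 2);
  const sinLon = Math.sin(toRad(b.lon - a.lon) / 2);
  const h =
    sinLat * sinLat +
    Math.cos(toRad(a.lat)) * Math.cos(toRad(b.lat)) * sinLon * sinLon;
  // Clamp to 1 to guard against floating-point overshoot.
  return 2 * EARTH_RADIUS_M * Math.asin(Math.min(1, Math.sqrt(h)));
}

// Format a distance for a label: meters below 1 km, otherwise kilometers.
function formatDistance(meters: number): string {
  return meters < 1000
    ? `${Math.round(meters)} m`
    : `${(meters / 1000).toFixed(1)} km`;
}
```

At the scale of a neighborhood egg hunt, the error from treating the Earth as a sphere is far smaller than the GPS error itself.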

When the user gets close enough to an Easter egg, the AR view changes to indicate the search area, and the name and remaining distance are pinned to the top of the screen so they stay visible while moving around.

Finding the physical egg was trigger enough for the egg hunters to move on, so no interaction was added to close a location; simply moving on will do. Visited locations are hidden once the user has moved a certain distance away.
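The distance-driven behavior described above can be thought of as a small state machine per location. The following is a minimal sketch under assumed values: the state names, the function name, and both distance thresholds are hypothetical and chosen only for illustration.

```typescript
// "far":    show the image and distance label
// "near":   show the search area and pin name/distance to the top
// "hidden": the location has been visited and the user has moved on
type SpotState = "far" | "near" | "hidden";

// Hypothetical thresholds in meters.
const NEAR_M = 20;  // closer than this counts as having reached the spot
const LEAVE_M = 40; // after a visit, further than this hides the spot

// Advance the state of one location given the latest distance to it.
function nextSpotState(state: SpotState, distanceM: number): SpotState {
  if (state === "far") {
    return distanceM <= NEAR_M ? "near" : "far";
  }
  if (state === "near") {
    return distanceM >= LEAVE_M ? "hidden" : "near";
  }
  return "hidden"; // visited locations stay hidden
}
```

Using a larger distance for leaving than for arriving means a jittery GPS reading near the boundary does not immediately hide a spot the user is still searching.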

Challenges

Precision hit us twice. Locations tend to move around, and especially to rotate around the user, before the GPS quality settles. There is some feedback available about GPS quality that we didn't have time to look into, but we recommend using it to provide a more solid experience.
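One way to act on GPS quality feedback, which we did not try in this project, would be to wait for a few consecutive accurate fixes before showing any locations. The sketch below assumes the input is the `accuracy` value (in meters) that the browser's Geolocation API reports with each position; the function name and both constants are hypothetical.

```typescript
// Hypothetical values; what counts as "good enough" depends on the experience.
const MAX_ACCURACY_M = 15;     // accept fixes reported as accurate to 15 m or better
const REQUIRED_GOOD_FIXES = 3; // require this many good fixes in a row

// Returns true when the most recent fixes are all accurate enough.
// `accuracies` holds the reported accuracy of each fix, oldest first.
function gpsSettled(accuracies: number[]): boolean {
  if (accuracies.length < REQUIRED_GOOD_FIXES) return false;
  return accuracies
    .slice(-REQUIRED_GOOD_FIXES)
    .every((a) => a <= MAX_ACCURACY_M);
}

// In a browser the readings could be collected roughly like this:
//   const readings: number[] = [];
//   navigator.geolocation.watchPosition(
//     (pos) => {
//       readings.push(pos.coords.accuracy);
//       if (gpsSettled(readings)) { /* show the AR overlays */ }
//     },
//     (err) => console.warn(err.message),
//     { enableHighAccuracy: true }
//   );
```

Until the check passes, the app could show a short "finding your position" message instead of overlays that drift around.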

GPS positions are not accurate enough to place a 3D model at a specific location and, maybe more importantly, the spatial tracking available through A-Frame and AR.js is not solid enough to keep an object from moving around after it has been placed. We “solved” this by adding a particle system to highlight the actual position when the user gets closer. The moving particles somewhat distract the user from the fact that the whole system is moving around a bit.

UI changes based on the distance to location

Conclusion

This was a very quick hack to get something into the hands of the eager egg hunters, but the feedback was very positive despite it being quite rough around the edges. Not having to download an app, and being able to jump straight into the experience from the webpage where they found the event, was highly appreciated.

Given more time, we would like to improve most visual aspects but, more importantly, look into other ways to improve the AR experience close to the locations. The WebXR specification, available in beta on Chrome, provides improved spatial tracking, so that will be exciting to play around with next time!